Testbed for Schema Evolution Tools

Author

Contents |

Abstract

"Change is the law of nature ". Confirming to this law of nature, Information systems are highly subjected to continuous changes, including changes in data, schema, applications and queries. Open source software development has also added to the magnitude of changes or evolution. Evolution adds complexity and can have a dramatic impact on Information Systems. However research to solve the problem of schema evolution is still in its infancy. Tools such as PRISM, PRIMA, HMM (Curino et al. 2008) have been recently proposed to assist DBAs in schema evolution. The Web Information Systems (WIS) group at UCLA is in the process of setting up a benchmark for such tools. As part of the effort, a testbed has been prepared to test the efficacy of those schema evolution support tools and others such as Schema Evolution Toolsuite and SQL2SMO Translator and PRISM. The first two tools are also useful in generating input data for PRISM in the form of Evolution Database or Schema Modification Operators (SMOs). PRISM has been tested with Schema Modification Operators (SMOs) and Integrity Constraints Modification Operators (ICMOs) to evaluate its efficiency in assisting in the Graceful Schema Evolution. This page introduces the testbed generation process and various input files used during the testing.

Motivation

Schema Evolution has gained recognition as a practical problem, and it has attracted researcher’s attention. However the research is still in its infancy. Several tools such as PRISM, PRIMA, HMM etc. have been proposed in the past and Web Information Systems group (WIS) at UCLA is in the process of setting up a benchmark for schema evolution. This testbed for testing schema evolution tools is an addition to that effort. It will provide a rich set of test cases to evaluate and provide feedback on the efficiency of such tools. The testbed also helps the beginners getting familiar with the typical changes taking place in any schema evolution and the type of inputs used by various tools to predict the impact of that evolution.

Fundamental Concepts

Information systems are continuously experiencing change with respect to data and schema. These changes are arising due to changing needs, added functionalities, new security and privacy issues and their solutions, compliance to new regulations upcoming every day. Database constructs are added removed and updated every second leading to immense impact on variety of applications and data stores. Even the small number of changes, can invalidate the legacy queries, data entry forms and the applications can crash. Adapting to the change is a costly process because it requires changes in logical and physical organization of data. It is even more time consuming and expensive to change applications, queries, views in order to adapt to the change. With the emergence of web information systems, the problem of evolution is becoming even more severe and complex. Another factor adding to this complexity is the open source software development which is usually a combined effort of many organizations or groups or people. Hence managing evolution in this kind of diverse environment is challenging. Let’s look at some important terms, concepts and definitions that are used in this page.

Schema Evolution- It refers to the process of schema undergoing structural changes in order to satisfy the changing needs. In other words, it can be considered as history of changes made to the schema of the database.

Versions- A version is defined as a stable and coherent state which the administrator or designer desires to keep. Generating a new version of a context is a process which results from human decision. This means that all context modifications do not necessarily generate new versions. [9]

Schema Modification Operators (SMOs)- An SMO is a function that receives as input a relational schema and the underlying database, and produces as output a (modified) version of the input schema and a migrated version of the database. [2]. SMOs represent one or more atomic changes proposed/applied to an input schema. Typical examples of SMOs include CREATE TABLE, COPY TABLE, and RENAME COLUMN etc.

Integrity Constraints Modification Operators (ICMOs)- An ICMO extends the idea of modification operators further to changes pertaining to purely integrity constraints. ICMOs represent atomic changes related to Primary Keys, Foreign Keys, and simple Value Constraints.

Testbed- "The term is used across many disciplines to describe a development environment that is shielded from the hazards of testing in a live or production environment. It is a method of testing a particular module (function, class, or library) in an isolated fashion. May be implemented similar to a sandbox, but not necessarily for the purposes of security. A test bed is used as a proof of concept or when a new module is tested apart from the program/system it will later be added to. A skeleton framework is implemented around the module so that the module behaves as if already part of the larger program. A typical test bed could include software, hardware, and networking components. In software, the hardware and software requirements are known as the testbed, which is also known as the test environment. Test beds are also pages on the Internet where the public is given the opportunity to test CSS or HTML they have created and wanted to see the outcome [11].”

Schema Evolution Tools

PRISM (Panta Rhei Information & Schema Manager) has been designed and developed to bridge the gap between theoretical results and their practical usability, by harvesting recent theoretical advances on schema mapping and query rewriting. PRISM represents the most advanced system supporting relational schema evolution available to date. The system automates a very large portion of the query adaptation work, thus, providing an invaluable operational tool to support DB Administrators (DBAs) in their everyday activities. PRISM addresses the two main challenges of Schema Evolution: predictability of the evolution process and logical independence of the evolution process. It provides a language of Schema Modification Operators (SMO) to express complex changes and allow DBA to evaluate the effect of such changes and also provide optimized translation of old queries to work on the new schema and automatic data migration. [3] Read More

ArchIS supports efficient temporal queries on the archived history of (fixed schema) databases [4].

PRIMA (Panta Rhei Information Management & Archival) introduces the transparent support for complex temporal queries over archives with evolving schema. Both PRISM and PRIMA exploit an intuitive operational language of Schema Modification Operators (SMOs) to explicitly capture the semantics of the schema evolution [4]. Read More

The HMM (Historical Metadata Manager) tool assists in archiving and querying rich metadata histories [4].

The Schema Evolution Toolsuite (SET), a tool developed by Fabrizio Moroni, is a framework to easily automate the benchmarking process. It collects statistical information on schema evolution of a dataset. The data about the evolution of a schema is stored in the MySQL data dictionary i.e. Information Schema is used to store this data. SET queries this data dictionary to gather the statistical measures such as average table/column lifetime, schema size etc.

SQL2SMO Translator is also developed by Fabrizio Moroni and it translates the given patch of SQL statements into corresponding Schema Modification Operators (SMOs) using some pattern matching techniques. It identifies five types of patterns viz. Join Pattern Type1, Join Pattern Type2, Merge Pattern, Partition Pattern, and Decompose Pattern.

Read more about SQL2SMO Translator

Testbed Generation Process

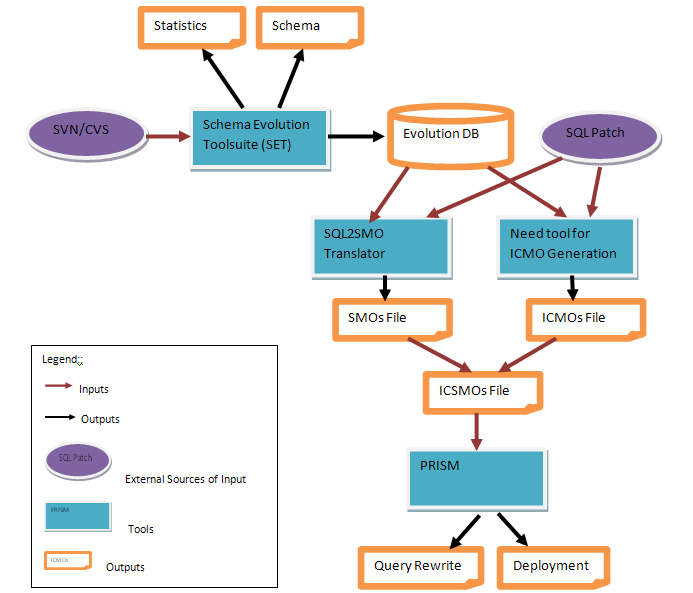

Testbed generation is carried out using the following four steps:

Step 1 Generate Evolution DB: The Schema Evolution Toolsuite (SET) has been used first on the datasets to collect statistical information on schema evolution. The data about the evolution of a schema is stored in the form of meta-data i.e. Information Schema is used to store this data in a MySQL database. The database is named as evolution_db_all. This database also contains evolution database for two other datasets, Typo3 and Coppermine. These datasets, if needed can be used for testing purposes in future. SET also generates different schema versions of a dataset and store them as separate databases. For example the Ensembl schema have undergone 412 revisions, so these revisions will be stored separately as databases on MySQL Server such as ensembl1_345 , ensembl1_346 etc. This step 1 indeed tested the first tool Schema Evolution Toolsuite (SET).

Step 2 Write SQL Patches: The SQL statements leading to an evolution from one version to other are collected together as a patch. These patches are available either with the datasets or the schemas can be manually analyzed to write these patches. For example ensembl_patch_30_to_31 is a patch for Ensembl schema evolution from version 30 to version 31. The SQL statements represent the changes made to the schema from version 30 to 31.

Step 3 Generate SMOs and ICMOs: The SQL2SMO tool has been used to generate SMOs and the tool itself is tested in turn in this step. SQL2SMO translates the patches into corresponding SMOs. By far no tool is available for generating ICMOs so the patches are manually converted into ICMOs for the changes related to integrity constraints. As an example the patch ensembl_patch_30_to_31 is translated by the SQL2SMO tool into a set of SMOs ensembl_SMO_30_to_31. Also the ICMOs have been written after analyzing the patch for integrity changes as ensembl_ICMO_30_to_31 and finally the set of modification operators is combined to form the ICSMOs (Integrity Constraints & Schema Modification Operators) named as ensembl_ICSMO_30_to_31.

Step 4 Predict and Evaluate the Effect of Proposed Changes: In order to analyze the effect of proposed changes the schema evolution tool PRISM has been used. This step indeed tests PRISM. The SMOs are extracted from already evolved schemas. So by feeding the SMOs and ICMOs for a particular schema version, the effect of proposed changes is viewed in the form of a new schema and the legacy queries have been automatically rewritten for the new schema using PRISM. PRISM is tested to see if it generates the expected new schema. For example ensembl_ICSMO_30_to_31 is provided as an input to PRISM, it predicted the new schema version 31 and this newly generated schema is compared against already existing schema version 31 to see if the prediction made by PRISM is accurate.

Figure 1: Testbed Generation Process

Figure 1: Testbed Generation Process

Test Cases

Test cases for testing SET with different datasets

Test cases for testing SQL2SMO Translator with different SQL Patches

Test cases for testing PRISM with different SMOs and ICMOs

Histories

| Overview | Information on Different Directories | Look Inside |

|---|---|---|

| Three datasets namely ENSEMBL, WIKIPEDIA, and XOOPS have been analyzed to extract SMOs and ICMOs. You can also find various schema versions for these datasets at SchemaSet1 or SchemaSet2 . Different schema versions have been analysed to write the SQL statements that have led to that evolution. Those SQL patches are further converted to SMOs or ICMOs or full ICSMOs that can be used as an input to the tool PRISM to predict future schemas and automatic query rewriting. These files are named using the following naming convention:

DB_FILETYPE_VERSIONFROM_TO_VERSIONTO For example files like ensembl_patch_31_to_32, ensembl_SMO_31_to_32, ensembl_ICMO_31_to_32, ensembl_ICSMO_31_to_32 contain the SQL patch , SMOs, ICMOs, full ICSMO respectively for the Ensembl schema evolution from version 31 to 32. Special Case: Synthetic ICMOs for Ensembl DB Synthetic ICMOs have been created to test PRISM for all possible constraint changes as the actual changes in terms of Integrity Constraint in Ensebml DB,were not enough for testing purposes. Three ICMOs sets have been created: Ensembl_ICMO_338_to_339_Test_all: Contains some valid/invalid ICMOs. Ensembl_ICMO_338_to_339_Test_Corrected: Contains only valid ICMOs. Ensembl_ICMO_339_to_340_Test: Contains only valid ICMOs. Ensembl_ICMO_340_to_341_Test: Contains only valid ICMOs. Database versions ensembl1_338 ia part of the actual dataset, and is in the database.Other versions like ensembl_339_Test,ensembl_340_Test are synthetic schema evolution versions. Separate scripts for creating these databases are included in the directory. |

An Example

ENSEMBL_SMOs_SQL2SMO: It contains SMOs for Ensembl dataset created using SQL2SMO tool. Ensembl_Fkeys: It contains Foreign Key relationships for various tables in different releases. Though they say these constraints are implemented at application level, not at the database level. Ensembl_ICMOs: It contains ICMOs for the Ensembl dataset. Ensembl_ICSMOs: It contains complete set of changes(SMOs and ICMOs) that lead to an evolution from one version to other for the Ensembl dataset. Ensembl_Inverse_SMOs: It contains Inverse SMOs for the Ensembl dataset. Ensembl_Patches_Full:It contains complete set of SQL statements that lead to an evolution from one version to other for the Ensembl dataset. For example add a column, drop an index, drop a FK etc. Ensembl_Patches_ICMOs: It contains set of SQL statements related to Integrity Constraint (IC) changes only. For example add a PK, drop a FK etc. Ensembl_Patches_SQL2SMO: It contains set of SQL statements related to Schema Modification (SM) changes only. For example add a column, drop a column etc. Ensembl_Schemas: It contains differnt versions of the schema. Ensembl_Synthetic_ICMOs: Contains synthetic patches,ICMOs and corresponding synthetic schema versions created for testing purposes. ensembl_revisions_analysis: Excel file contains revision analysis for Ensembl dataset. |

ENSEMBL |

Know More

| Useful Scripts and Links | SMOs and ICMOs Syntax |

|---|---|

| Scripts to Create Evolution Databases for Different Datasets

Evolution DB for Wikipedia and Ensembl Evolution DB for Wikipedia, Ensembl, Xoops, Coppermine and Typo3 These databases are already available in a MYSQL server at wisgate.cs.ucla.edu. The login information can be obtained by email.

|

SMOs and ICMOs(i.e. ICSMOs):

1.ADD COLUMN < col > < type > AS < const/funct > INTO < tab >; 2.DROP COLUMN < col > FROM < tab >; 3.CREATE TABLE < tab > (< col1 > < type1 >,< col2 > < type2 >); 4.DROP TABLE < tab >; 5.RENAME TABLE < tab1 > INTO < tab2 >; 6.RENAME COLUMN < col1 > IN < tab1 > TO < col2 >; 7.COPY TABLE < tab1 > INTO < tab2 >; 8.JOIN TABLE < tab1 >, < tab2 > INTO < tab3 > WHERE < cond >; 9.DECOMPOSE TABLE < tab1 > INTO < tab2(col1,col2) >, < tab3(col1,col3) >; 10.PARTITION TABLE < tab1 > INTO < tab2 > WITH < cond1 >, < tab3 > WITH < cond2 >; 11.MERGE TABLE < tab1 >, < tab2 > INTO < tab3 >; 12.NOP; 13.ALTER TABLE < tab > ADD PRIMARY KEY < id > (col1,col2) CHECK; 14.ALTER TABLE < tab > ADD PRIMARY KEY < id > (col1,col2) ENFORCE; 15.ALTER TABLE < tab > ADD PRIMARY KEY < id > (col1,col2) DECLARE; 16.ALTER TABLE < tab > ADD FOREGN KEY < id > (col1,col2) REFERENCES < tab2 >(col3,col4) CHECK; 17.ALTER TABLE < tab > ADD FOREGN KEY < id > (col1,col2) REFERENCES < tab2 >(col3,col4) ENFORCE; 18.ALTER TABLE < tab > ADD FOREGN KEY < id > (col1,col2) REFERENCES < tab2 >(col3,col4) DECLARE; 19.ALTER TABLE < tab > ADD VALUE CONSTRAINT < id > < cond > CHECK; 20.ALTER TABLE < tab > ADD VALUE CONSTRAINT < id > < cond > ENFORCE; 21.ALTER TABLE < tab > ADD VALUE CONSTRAINT < id > < cond > DECLARE; 22.ALTER TABLE < tab > DROP PRIMARY KEY < id >; 23.ALTER TABLE < tab > DROP FOREIGN KEY < id >; 24.ALTER TABLE < tab > DROP VALUE CONSTRAINT < id >; |

References

[1] Carlo A. Curino, Hyun J. Moon, Letizia Tanca, Carlo Zaniolo. “Schema Evolution in Wikipedia: toward a Web Information System Benchmark”, International Conference on Enterprise Information Systems (ICEIS) 2008.

[2] Carlo A. Curino, Hyun J. Moon, and Carlo Zaniolo. "Graceful database schema evolution: the PRISM workbench". VLDB, 2008.

[3] Hyun J. Moon, Carlo A. Curino, Alin Deutsch, Chien-Yi Hou, and Carlo Zaniolo. "Managing and querying transaction-time databases under schema evolution". VLDB, 2008.

[4] C. Curino, H. J. Moon, and C. Zaniolo. “Managing the history of metadata in support for db archiving and schema evolution”. ECDM, 2008.

[5] C. Curino, H. J. Moon, MyungWon Ham, and C. Zaniolo. “The PRISM Workwench: Database Schema Evolution Without Tears”. ICDE, 2009.

[6] Moon, H.J., Curino, C.A., Deutsch, A., Hou, C.Y., Zaniolo, C. “ Managing and querying transaction-time databases under schema evolution”. VLDB, 2008.

[7] Wang, F., Zaniolo, C. “ An XML-Based Approach to Publishing and Querying the History of Databases. World Wide Web”. Web Information Systems Engineering, 2005.

[8] Wang, F., Zaniolo, C., Zhou, X. “Archis: An xml-based approach to transaction time temporal database systems”. VLDB Journal, 2008.

[9] José Andany , Michel Léonard , Carole Palisser. “Management Of Schema Evolution In Databases”. Proceedings of the 17th International Conference on Very Large Data Bases, p.161-170, September 03-06, 1991.

[10] Moroni Fabrizio, Chapter 3, “Schema Evolution Toolsuite: analysis and interpretation of relational schema changes”. Master’s Thesis, 2008/2009.

[12] http://www.mediawiki.org/wiki/MediaWiki

[13] http://yellowstone.cs.ucla.edu/schema-evolution/index.php/Benchmark_home